How Agents Actually Get Work Done

Once an agent has a plan, the next question is what happens when it starts

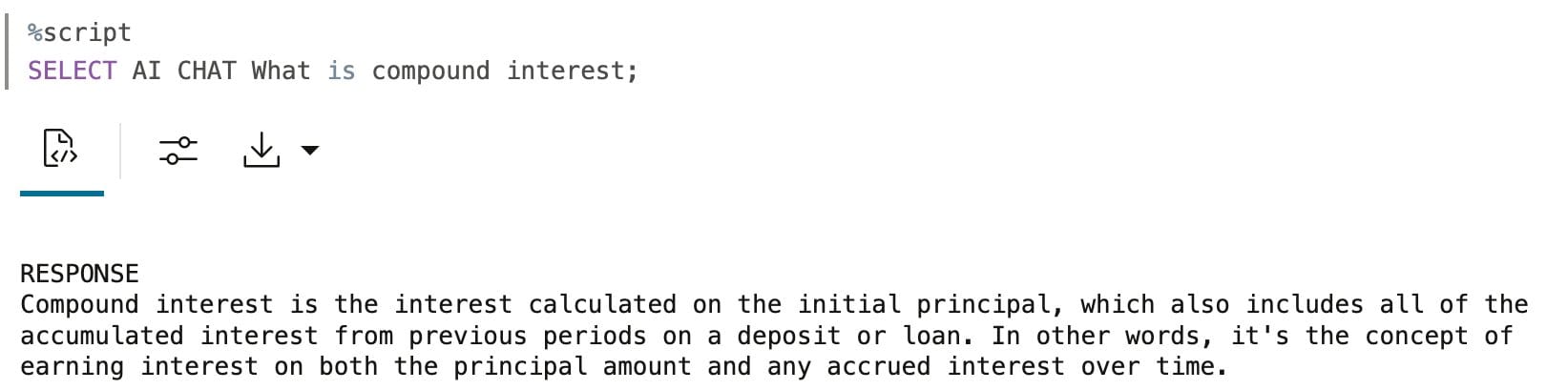

Most people's experience with AI starts with a simple prompt. You type a question, the model gives you an answer, and that's the end of it. That interaction pattern is often called a zero-shot prompt, meaning you ask a single question without providing worked examples or prior context.

That works well for a lot of things.

The issue shows up when you need more than an answer and actually want something to get done. This post focuses on that gap and why the agent pattern exists in the first place.

Zero-shot prompting works best when the goal is understanding rather than execution. In those cases, you want a response you can read and act on yourself.

It's especially useful for things like brainstorming ideas, explaining concepts, drafting text, or answering one-off questions. Chat-style AI feels immediately helpful here because the interaction is simple: you ask something, you get something back, and you move on.

The trouble starts when the task spans more than one step.

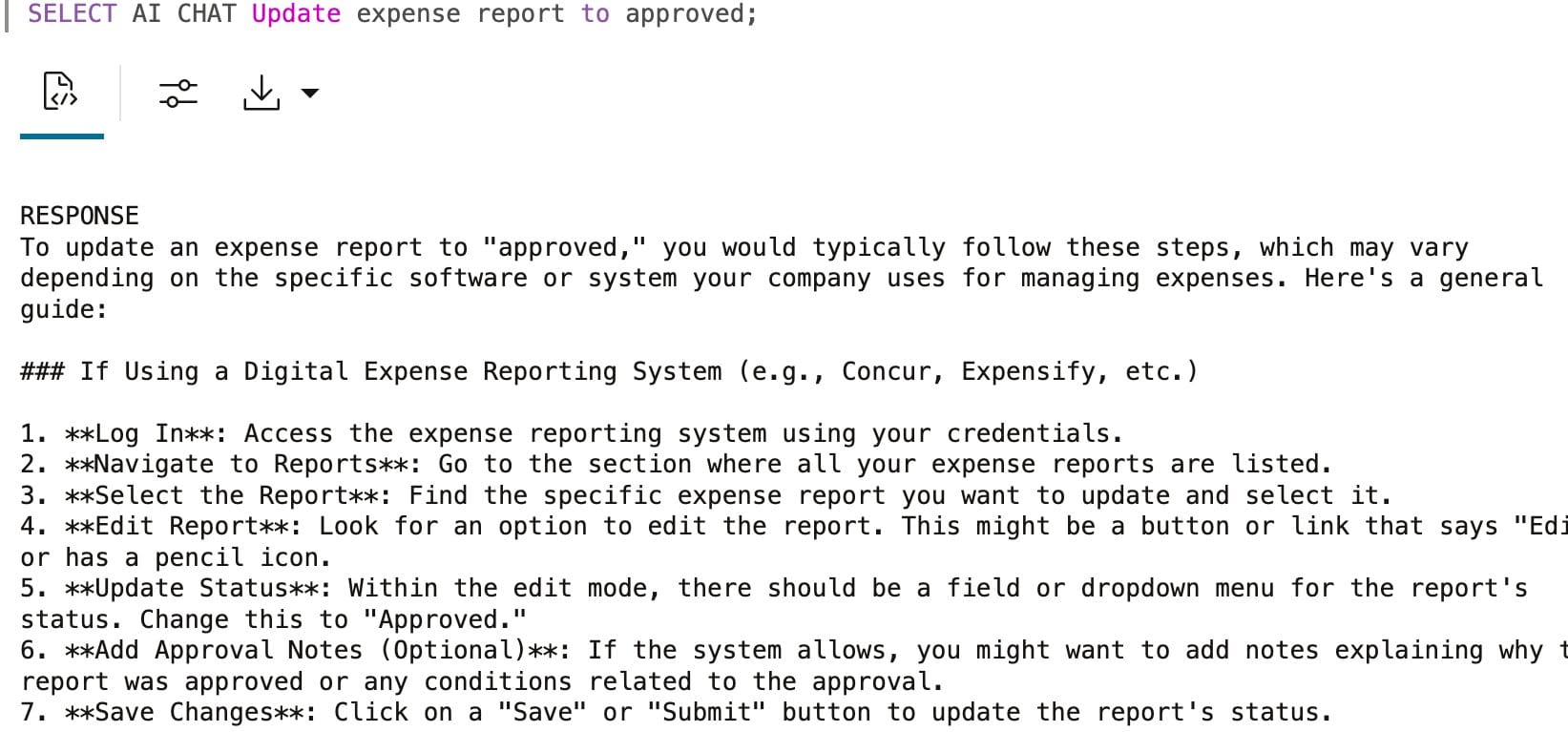

Consider something simple like approving an expense report. A zero-shot response might explain what to check, which policy applies, when approval is required, and what exceptions look like. That information can be correct and still incomplete in practice.

None of the work actually gets done. You still have to look up the report, verify the amounts, apply the policy, and record the decision. The model explains the process, but execution is still manual. This is fine until these tasks start repeating.

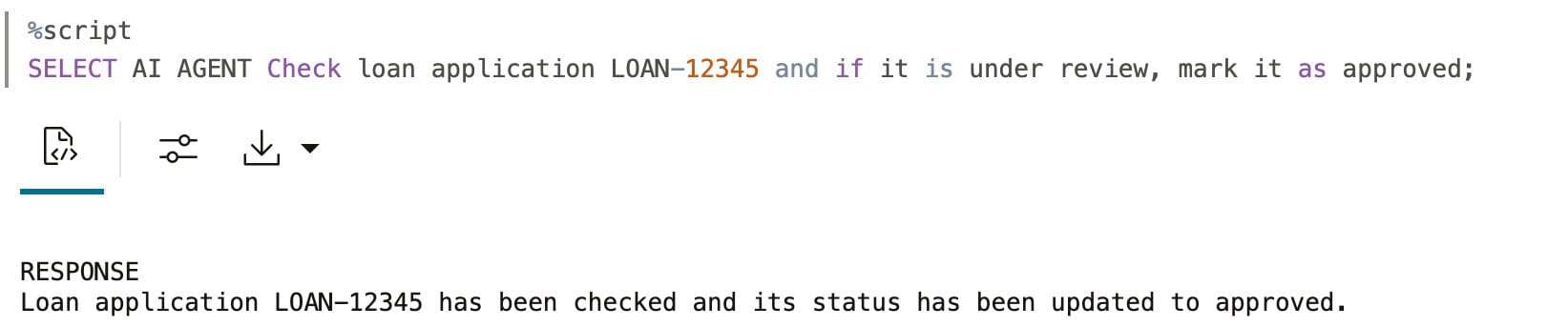

Agents exist to close that gap between explanation and execution.

Instead of returning a list of steps, an agent is allowed to take responsibility for the work itself. It breaks the task into actions, calls the right tools, checks results as it goes, and stops or escalates when needed.
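To make that loop concrete, here is a minimal sketch of the expense report example in Python. Everything in it is an assumption for illustration: the in-memory `REPORTS` and `DECISIONS` stores stand in for real systems, the `AUTO_APPROVE_LIMIT` threshold is an invented policy, and the helper names are hypothetical. A real agent would let a model choose the actions; here the steps are hard-coded so the shape of "act, check, then stop or escalate" is easy to see.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class ExpenseReport:
    report_id: str
    line_items: list[float]
    claimed_total: float


# Hypothetical stand-ins for real systems (assumptions, not a real API).
REPORTS = {
    "R-100": ExpenseReport("R-100", [120.0, 80.0], 200.0),
    "R-101": ExpenseReport("R-101", [900.0, 400.0], 1300.0),
    "R-102": ExpenseReport("R-102", [50.0, 25.0], 100.0),
}
AUTO_APPROVE_LIMIT = 500.0  # assumed policy threshold
DECISIONS: dict[str, str] = {}  # stand-in for the system of record


def look_up_report(report_id: str) -> ExpenseReport | None:
    """Tool: fetch the report, or None if it does not exist."""
    return REPORTS.get(report_id)


def amounts_match(report: ExpenseReport) -> bool:
    """Tool: verify that the line items add up to the claimed total."""
    return abs(sum(report.line_items) - report.claimed_total) < 0.01


def record_decision(report_id: str, decision: str) -> None:
    """Tool: write the outcome back so the system reflects what happened."""
    DECISIONS[report_id] = decision


def handle_expense_report(report_id: str) -> tuple[str, list[str]]:
    """Run the task end to end; return the decision and a progress log."""
    log: list[str] = []

    report = look_up_report(report_id)
    log.append("looked up report")
    if report is None:
        decision = "escalated"  # nothing to check, hand off to a person
        log.append("report not found")
    elif not amounts_match(report):
        decision = "escalated"  # a check failed, so stop here
        log.append("line items do not match claimed total")
    elif report.claimed_total > AUTO_APPROVE_LIMIT:
        decision = "escalated"  # policy requires human approval
        log.append("total exceeds auto-approve limit")
    else:
        decision = "approved"
        log.append("amounts verified and within policy")

    record_decision(report_id, decision)
    log.append(f"recorded decision: {decision}")
    return decision, log


if __name__ == "__main__":
    for rid in ("R-100", "R-101", "R-102"):
        print(rid, handle_expense_report(rid))
```

The detail worth noticing is that every path ends with `record_decision`, including the escalations. The same steps a person would otherwise do by hand (look up, verify, apply policy, record) are carried out and logged, rather than described.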

The difference here isn't intelligence. It's responsibility. An agent is designed to do the work, not just describe it.

The easiest way to see the difference is to compare capabilities directly.

| Capability | Zero-Shot Prompt | Agent |
|---|---|---|
| Understands intent | Yes | Yes |
| Explains steps | Yes | Yes |
| Executes actions | No | Yes |
| Handles multi-step work | Poorly | Well |
| Tracks progress | No | Yes |
| Applies policy consistently | No | Yes |

Zero-shot prompting is good at answering questions. Agents are built to handle tasks end to end.

In small demos, the difference doesn't feel important. Outside of a demo, the friction adds up fast. Humans repeat the same steps, policies get applied inconsistently, context gets lost between actions, and nothing improves over time.

Agents become useful once tasks repeat, rules matter, outcomes need to be tracked, and systems need to reflect what actually happened. This is usually the point where people stop asking for "better prompts" and start needing a different pattern.

It's worth being explicit about this. If a task is one-off, doesn't touch real systems, doesn't need consistency, and doesn't require follow-through, a prompt is often enough.
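That checklist can be written down as a small rule of thumb. The sketch below is one possible reading, not a formal rule: the `TaskProfile` fields and the `suggested_pattern` function are hypothetical names, and treating any single "yes" as a reason to consider an agent is an assumption made for this example.

```python
from dataclasses import dataclass


@dataclass
class TaskProfile:
    repeats: bool                # is this more than a one-off?
    touches_real_systems: bool   # does it read or write real systems?
    needs_consistency: bool      # must rules be applied the same way each time?
    needs_follow_through: bool   # does something have to happen after the answer?


def suggested_pattern(task: TaskProfile) -> str:
    """Return "prompt" when none of the four conditions apply, else "agent"."""
    needs_agent = (
        task.repeats
        or task.touches_real_systems
        or task.needs_consistency
        or task.needs_follow_through
    )
    return "agent" if needs_agent else "prompt"


if __name__ == "__main__":
    brainstorm = TaskProfile(False, False, False, False)
    expense_approval = TaskProfile(True, True, True, True)
    print(suggested_pattern(brainstorm))        # prompt
    print(suggested_pattern(expense_approval))  # agent
```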

Agents earn their place when work needs to move from suggestion to completion.

Lab 2: Prompt vs Agent (~10 minutes)

This lab walks through the same task using a zero-shot prompt and then using an agent so you can see where execution changes the outcome.

Once you let agents take responsibility for execution, the next question becomes how they decide what to do first. We cover that in How Agents Plan the Work.

This is part of the AI Agents Series. Previously: What Is an AI Agent, Really?