Why Agents Need Memory

One of the first things people notice when they move from demos to real usage is that most agents don't remember anything. Each interaction starts fresh. Once a session ends, whatever happened before is gone.

That might not matter in a quick demo, but it becomes a real problem once an agent is expected to help with ongoing work.

Here's a situation that comes up more often than people expect.

On Monday, a customer contacts support about a billing issue. The agent investigates, figures out what went wrong, and starts a correction. It takes some time, but progress is made.

On Tuesday, the same customer comes back to ask for an update. The agent responds by asking them to explain the issue again.

Nothing is broken. The agent is doing exactly what it was designed to do; it simply has no access to what happened before. The problem isn't that the agent is slow. It's that it can't build on anything it has already done.

In a demo, starting from scratch every time feels fine. Outside of a demo, the friction adds up fast.

Without memory, agents repeat work and lose context between sessions. Their decisions become harder to explain, and humans end up redoing reasoning the agent already did.

Once an agent is involved in anything that spans more than a single interaction, memory stops being optional.

Some systems store chat history, which helps with tone and conversational continuity. That's useful, but it's not the same thing as remembering work. The distinction matters once an agent is expected to do more than have a conversation.

| Chat Memory | Agent Memory |
|---|---|
| Preferences and tone | Decisions and outcomes |
| Conversation history | Workflow state |
| Corrections to phrasing | Exceptions that were made |
| Short-lived context | Long-lived facts |

Chat memory personalizes interaction. Agent memory preserves what actually happened.

When an agent can remember, work doesn't reset between sessions. Exceptions carry forward, similar situations get handled more consistently, and past outcomes shape future decisions.

Without memory, every interaction is isolated. With memory, work starts to accumulate.

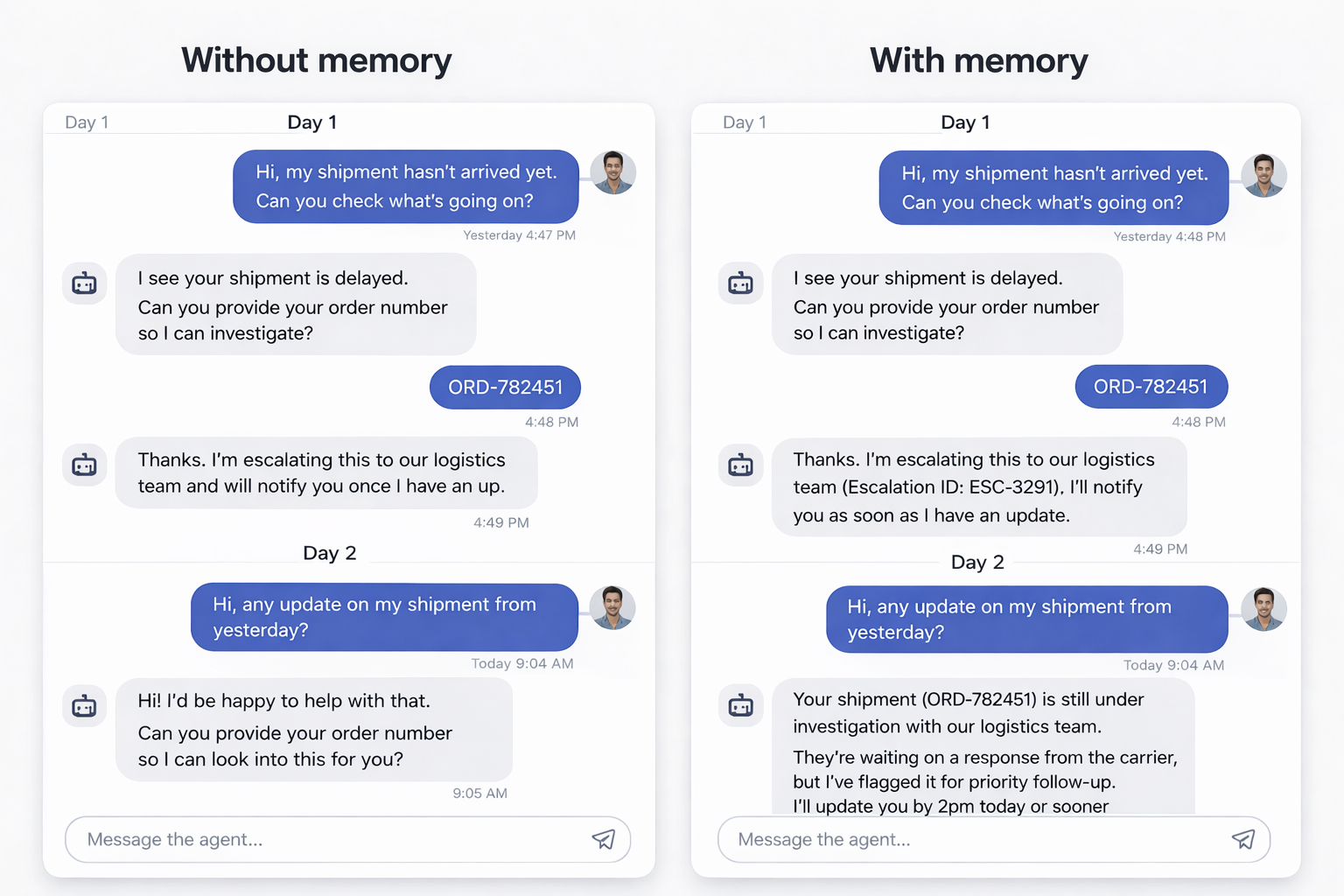

Consider a customer who asks about a delayed shipment. Without memory, the agent investigates and escalates the issue. The next day, the customer asks for an update, and the agent starts over.

With memory, the agent recognizes the issue, checks the existing escalation, and gives a current status update. Same capability, but a very different experience.
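To make the difference concrete, here is a minimal Python sketch of the shipment scenario. It assumes a plain dictionary standing in for a persistent store, and every name in it (`Escalation`, `EscalationStore`, `handle_stateless`, `handle_with_memory`) is hypothetical; a real agent would back this with a database and its own tools.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Escalation:
    # Hypothetical record of work the agent already did for a customer.
    ticket_id: str
    issue: str
    status: str


@dataclass
class EscalationStore:
    # Stand-in for a persistent store; a real system would use a database.
    by_customer: dict[str, Escalation] = field(default_factory=dict)

    def save(self, customer_id: str, escalation: Escalation) -> None:
        self.by_customer[customer_id] = escalation

    def find(self, customer_id: str) -> Escalation | None:
        return self.by_customer.get(customer_id)


def handle_stateless(customer_id: str, message: str) -> str:
    # No access to earlier sessions: every request starts from scratch.
    return "Can you describe the issue you're having?"


def handle_with_memory(store: EscalationStore, customer_id: str, message: str) -> str:
    # Check for existing work before asking the customer to start over.
    existing = store.find(customer_id)
    if existing is not None:
        return (f"Your {existing.issue} is escalated as {existing.ticket_id}; "
                f"current status: {existing.status}.")
    return "Can you describe the issue you're having?"


# Day 1: the agent investigates, escalates, and records what it did.
store = EscalationStore()
store.save("cust-42", Escalation("ESC-1001", "delayed shipment", "awaiting carrier response"))

# Day 2: the customer asks for an update.
print(handle_stateless("cust-42", "Any update?"))
print(handle_with_memory(store, "cust-42", "Any update?"))
```

Both handlers have the same capability; the only difference is whether the second day can see what the first day recorded.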

An agent makes decisions based on what it remembers. If that memory is hard to inspect or understand, the agent's behavior becomes hard to explain.

This is why memory should be treated as part of the system, not an afterthought.
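One way to keep memory inspectable is to have every decision record which memory entries it relied on. The Python sketch below uses a hypothetical `MemoryEntry` record and a made-up late-fee rule purely for illustration; the point is the `based_on` trail, which lets a human trace an action back to what the agent remembered.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class MemoryEntry:
    # Hypothetical structured memory record about a customer.
    entry_id: str
    customer_id: str
    key: str
    reason: str


@dataclass(frozen=True)
class Decision:
    action: str
    based_on: tuple[str, ...]  # ids of the memory entries that influenced this decision


def decide_late_fee(memory: list[MemoryEntry], customer_id: str) -> Decision:
    # Only this customer's remembered waivers are considered.
    waivers = [m for m in memory
               if m.customer_id == customer_id and m.key == "late_fee_waiver"]
    if waivers:
        return Decision("waive late fee", tuple(m.entry_id for m in waivers))
    return Decision("apply late fee", ())


def explain(decision: Decision, memory: list[MemoryEntry]) -> str:
    # Turn the cited memory ids back into human-readable reasons.
    by_id = {m.entry_id: m for m in memory}
    if not decision.based_on:
        return f"{decision.action} (no remembered exceptions)"
    reasons = "; ".join(f"{i}: {by_id[i].reason}" for i in decision.based_on)
    return f"{decision.action} because {reasons}"


memory = [MemoryEntry("m1", "cust-42", "late_fee_waiver", "billing error being corrected")]
print(explain(decide_late_fee(memory, "cust-42"), memory))
print(explain(decide_late_fee(memory, "cust-7"), memory))
```

If the agent ever does something surprising, the cited ids point directly at the memory that caused it, instead of leaving someone to guess.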

Not everything should be remembered forever. Useful agent memory usually falls into a few categories: facts that should persist across sessions, decisions that were made and why, exceptions that changed normal behavior, and outcomes that should influence future work.

Storing these deliberately is what allows agents to feel consistent instead of repetitive.
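The four categories can be made explicit in code. This minimal sketch assumes an in-process list as storage and hypothetical names (`Kind`, `Memory`, `AgentMemory`). Because `remember` only accepts one of the four kinds, and requires a reason for decisions and exceptions, anything that doesn't fit (small talk, transient chatter) has no place to be stored.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum


class Kind(Enum):
    FACT = "fact"            # should persist across sessions
    DECISION = "decision"    # what was decided, and why
    EXCEPTION = "exception"  # a deviation from normal behavior
    OUTCOME = "outcome"      # a result that should shape future work


@dataclass(frozen=True)
class Memory:
    kind: Kind
    subject: str
    content: str
    reason: str = ""
    recorded_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


class AgentMemory:
    def __init__(self) -> None:
        self._entries: list[Memory] = []

    def remember(self, kind: Kind, subject: str, content: str, reason: str = "") -> Memory:
        # Decisions and exceptions are only useful later if the "why" is kept too.
        if kind in (Kind.DECISION, Kind.EXCEPTION) and not reason:
            raise ValueError(f"{kind.value} entries need a reason")
        entry = Memory(kind, subject, content, reason)
        self._entries.append(entry)
        return entry

    def recall(self, subject: str, *kinds: Kind) -> list[Memory]:
        # Entries about a subject, optionally limited to certain kinds.
        return [m for m in self._entries
                if m.subject == subject and (not kinds or m.kind in kinds)]


mem = AgentMemory()
mem.remember(Kind.FACT, "cust-42", "prefers email contact")
mem.remember(Kind.EXCEPTION, "cust-42", "late fee waived for March",
             reason="billing error on our side")
mem.remember(Kind.OUTCOME, "cust-42", "invoice correction issued")

for m in mem.recall("cust-42", Kind.EXCEPTION):
    print(m.content, "-", m.reason)
```

Recording the reason alongside the content is what lets a later session treat the exception as a deliberate choice rather than an inconsistency.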

Lab 5: Experience the Memory Gap (~10 minutes)

This lab shows how an agent behaves without memory and why that matters for real work.

Memory gives agents continuity, but memory alone isn't enough. In the next post, we look at what happens when agents don't have access to your actual business data.

This is part of the AI Agents Series. Previously: How Agents Actually Get Work Done.